
Three often overlooked considerations when designing the sample plan for an online survey

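Three considerations related to survey sampling are often overlooked: whether you can realistically obtain the sample you need, the quality of that sample, and its make-up.

The first consideration is best described through three questions: How many completes do you need? How long will the survey be in field? What will each interview cost?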

Start with sample size. A common rule of thumb for basic surveys that rely on descriptive analytics is n = 100–150 for each subgroup you want to analyze separately. Advanced statistical analyses, however, often require a much larger n, and each technique has its own minimum sample size for producing statistically reliable results. Can you get enough completes to make your quantitative results sound?
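One way to see why 100–150 per subgroup is a workable floor for descriptive work is the margin of error on a percentage at each sample size. This is an illustration, not necessarily how the rule of thumb was derived, and it assumes a simple random sample, the normal approximation, and the worst case of a 50/50 split (p = 0.5) at 95% confidence (z = 1.96):

```python
import math

def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a proportion.

    Assumes simple random sampling and the normal approximation;
    p = 0.5 gives the widest (worst-case) interval.
    """
    return z * math.sqrt(p * (1 - p) / n)

for n in (100, 150, 300):
    print(f"n = {n}: +/- {margin_of_error(n):.1%}")
```

Under these assumptions, a subgroup of n = 100 gives roughly ±9.8 percentage points and n = 150 roughly ±8.0. Comparing two subgroups against each other is less precise than either estimate alone, which is one reason analysis techniques that compare groups push the required n higher.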

Field time becomes a factor when the opinions and experiences of your sample could change while the survey is in field, making respondents who complete the survey early materially different from those who complete it later. For stable subjects, such as paper products, longer field times are fine. For others, such as high-profile public figures, opinions can literally change overnight.

Cost per interview (CPI) is the payment or compensation a respondent receives for completing a survey, plus any management costs associated with securing the survey sample. Generally speaking, the harder a respondent is to find or access, the higher their CPI. Sample costs can range from zero to a couple hundred dollars per interview, depending on the respondent criteria you have defined for your survey. Scale a CPI to 100+ completes and sample costs can get steep quickly.
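Because CPI is charged per complete, total sample cost is CPI multiplied by completes, summed across any subgroups that carry different CPIs. The sketch below applies this to the pet-owner example discussed later in this article; the CPI values are made up for illustration, assuming hard-to-find dolphin owners cost far more per interview than dog or cat owners:

```python
# Hypothetical CPIs in USD, for illustration only; real CPIs depend on
# your respondent criteria and sample source.
cpi_by_group = {"dog owners": 5, "cat owners": 5, "dolphin owners": 200}
completes_by_group = {"dog owners": 100, "cat owners": 100, "dolphin owners": 100}

def sample_cost(cpi: dict[str, float], completes: dict[str, int]) -> float:
    """Total sample cost: sum over groups of CPI x number of completes."""
    return sum(cpi[group] * completes[group] for group in completes)

total = sample_cost(cpi_by_group, completes_by_group)
print(f"Total sample cost: ${total:,.2f}")
```

With these assumed CPIs, the single hard-to-reach subgroup accounts for $20,000 of a $21,000 budget, which is how costs climb quickly once a CPI is scaled to 100+ completes.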

Next is sample quality: are the people you want to take your survey actually the ones taking it? This question becomes critical when you use outside sample sources.

Fraudulent respondents are real, so you must build a number of “mouse traps” into your survey to catch them. This means designing screening questions that determine whether the person you are interviewing has the characteristics you need for valid data. When creating this screener, avoid yes/no or leading questions. Ask questions in such a way that only a legitimate respondent should be able to answer them correctly. And, where appropriate, add “foils.”

What do I mean by “foil”? Let’s say you want to reach cosmetics users. You might include a fake brand in the list when asking a potential respondent, “Which of the following brands of cosmetics have you used in the past 6 months?” If the respondent selects the fake brand, they are disqualified from the survey.
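The foil check amounts to a simple disqualification rule applied to the respondent’s answer. A minimal sketch, with hypothetical brand names (“Foil Brand Z” stands in for a fake brand) and one added assumption not stated above: a respondent who selects no real brand is also screened out, since they do not appear to be a cosmetics user:

```python
# Hypothetical answer options; "Foil Brand Z" is a placeholder for a fake brand.
REAL_BRANDS = {"Brand A", "Brand B", "Brand C"}
FOIL_BRANDS = {"Foil Brand Z"}

def passes_brand_screener(selected: set[str]) -> bool:
    """Return True if the respondent may continue past this screener.

    Disqualifies anyone who claims to have used a foil (fake) brand.
    Assumption: also disqualifies anyone who selects no real brand.
    """
    if selected & FOIL_BRANDS:
        return False  # claimed to use a brand that does not exist
    return bool(selected & REAL_BRANDS)  # must have used at least one real brand

print(passes_brand_screener({"Brand A"}))                  # qualifies
print(passes_brand_screener({"Brand A", "Foil Brand Z"}))  # disqualified by the foil
```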

Finally, sample make-up refers to who is represented in your survey sample. When creating your sample plan, you make choices about quotas, minimum or maximum numbers of completes, and sample mixes. A study’s analysis plan drives the definition of its sample make-up.

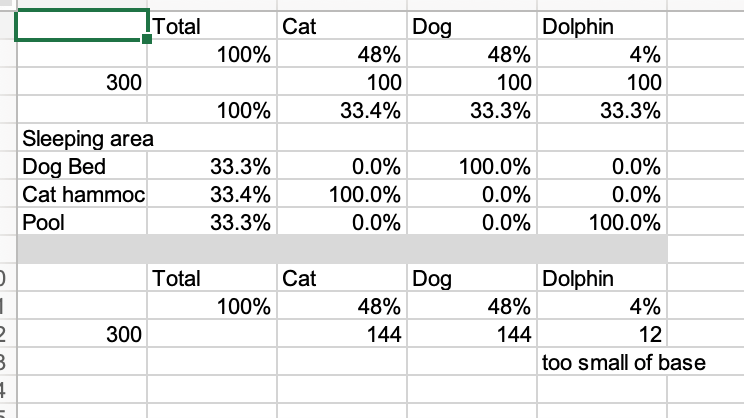

For example, let’s assume a pet supply manufacturer wants to learn more about the housing preferences that pet owners have for three different types of pets — dogs, cats, and dolphins.

If you want to be able to perform subgroup statistical analysis between the dog owners, cat owners, and dolphin owners, then you will want a minimum of n = 300 respondents in the study (n = 100 dog owners, n = 100 cat owners, and n = 100 dolphin owners).

However, this overall sample make-up may not reflect pet ownership in the market being studied. Let’s assume that 48% of pet owners own a dog, 48% own a cat, and 4% own a dolphin.

If we sampled the same n = 300 in proportion to ownership, we would have n = 144 dog owners, n = 144 cat owners, and only n = 12 dolphin owners, far too few to confidently stat test dolphin owners’ preferences against those of cat and dog owners.
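The arithmetic behind proportional allocation is easy to check, and the same calculation shows how large a proportional sample would have to be to give the rarest group enough completes. A minimal sketch using the assumed 48/48/4 ownership shares from the example (the function names are illustrative):

```python
import math

# Assumed market shares of pet ownership from the example, in whole percent.
market_share_pct = {"dog": 48, "cat": 48, "dolphin": 4}

def proportional_allocation(total_n: int, shares_pct: dict[str, int]) -> dict[str, int]:
    """Split total_n across groups in proportion to their market share.

    Rounding can make the groups sum to slightly more or less than total_n.
    """
    return {group: round(total_n * pct / 100) for group, pct in shares_pct.items()}

def total_n_for_minimum(min_n: int, shares_pct: dict[str, int]) -> int:
    """Smallest proportional sample that gives every group at least min_n completes."""
    smallest = min(shares_pct.values())
    return math.ceil(min_n * 100 / smallest)

print(proportional_allocation(300, market_share_pct))
print(total_n_for_minimum(100, market_share_pct))
```

Under these shares, reaching n = 100 dolphin owners through proportional sampling alone would take n = 2,500 completes in total, which is why an evenly split n = 300 design is attractive for subgroup testing even though it does not mirror the market.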

This nuance becomes a problem when you want to report survey results for the total sample. In the evenly split sample, dolphin owners make up one third of respondents instead of 4%, so if we use that total we may conclude there is surprisingly high interest in saltwater pools. While I may have a very talented cat (Loki), I do not think he would have much interest in putting on a wetsuit to take a nap.

While this is a humorous example, the same problem often arises when a client insists on, say, evenly split samples by sex/gender, age, education level, or other characteristics that do not match the profile of the population being studied.
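The article does not prescribe a fix, but one standard technique for reporting a total from a deliberately disproportionate sample is post-stratification weighting: compute each subgroup’s result separately, then combine the results using each subgroup’s share of the population rather than its share of the sample. A minimal sketch, assuming the 48/48/4 ownership shares above; the “interested in a saltwater pool” counts are invented purely for illustration:

```python
# Population shares from the example; interest counts are hypothetical.
population_share = {"dog": 0.48, "cat": 0.48, "dolphin": 0.04}
completes = {"dog": 100, "cat": 100, "dolphin": 100}
interested = {"dog": 10, "cat": 5, "dolphin": 90}  # made-up "wants a saltwater pool" counts

def unweighted_rate(interested: dict[str, int], completes: dict[str, int]) -> float:
    """Total-sample rate that treats every respondent equally."""
    return sum(interested.values()) / sum(completes.values())

def weighted_rate(interested: dict[str, int], completes: dict[str, int],
                  population_share: dict[str, float]) -> float:
    """Post-stratified rate: each group's rate weighted by its population share."""
    return sum(population_share[g] * interested[g] / completes[g] for g in completes)

print(f"Unweighted total: {unweighted_rate(interested, completes):.1%}")
print(f"Weighted total:   {weighted_rate(interested, completes, population_share):.1%}")
```

Equivalently, each dolphin owner carries a weight of 0.04 / (100/300) = 0.12 and each dog or cat owner a weight of 1.44. The subgroup comparisons keep their full n = 100 each, while the total reflects the market instead of overstating dolphin owners’ enthusiasm for saltwater pools.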

Bottom line: pulling together a survey that addresses your marketing management questions is not a trivial task. It takes upfront planning and vigilance to structure your sample make-up so that it achieves your project goals in a feasible and accurate manner.

Rob Maihofer is Sylver's Senior Market Research Analyst. He is responsible for consulting with Sylver Consulting Project Managers on execution of marketing research projects, especially in the areas of marketing management questions, target audience identification and recruitment, analysis plans, and presentation options.

Rob has a BA from Michigan State University and an MBA from the University of Illinois at Chicago, and he has completed many continuing education programs through the Burke Institute.

